Intro to Recursive SNARKs

To understand what recursion is, you must first understand recursion.

Background

The popularity of Zero-Knowledge Proofs (ZKPs) has grown in recent years due to several factors, including advancements in cryptographic research (eg. post-quantum cryptography), increasing demand for privacy and security, and their applicability to solve a variety of challenges such as scalability (see ZK rollups).

This is the result of various converging developments such as the introduction of ZK-SNARK setup in 2011 demonstrating the construction of succinct and non-interactive proofs, which are generated in a way that is more efficient and scalable.

(Here is an real world application example.)

As shown in chart, much of the development in zero-knowledge proof takes the zk-SNARK proof construction as it is considered more efficient and faster by their proponents as innovations were made in proof creation and verification (see PLONK and Marlin).

Furthermore, weaknesses in zk-SNARK setup are also being addressed, namely in the need of having a trusted setup. (See Sonic, Supersonic)

Motivation

With traditional SNARK setups, there are various trade-offs that should be considered, namely that of proof size and prover time.

The proof size refers to the amount of data that is required to represent the proof. A smaller proof size is desirable because it reduces the amount of data that needs to be transmitted between the prover and verifier and reduces the amount of storage required to store the proof. However, smaller proof sizes often come at the cost of longer prover times.

This trade-off arises because the proof generation process involves performing a series of computations to transform the input data into a proof. To generate a smaller proof size, the prover needs to perform more efficient computations that produce fewer intermediate values.

Proof Generation

Some of the immediate values in proof generation include:

Public parameters used to construct the elliptic curve and other cryptographic primitives used in the proof generation process. Public parameters are generated during the trusted setup phase and are publicly known.

Random values generated used in the proof generation process: Random values are used to add randomness to the proof and make it more secure such as in polynomial commitment schemes, homomorphic encryption, random challenges from the verifier, blinding factors.

Witnesses: These are the inputs to the computation being proven, such as the values of variables in a circuit. Witnesses are used to compute the constraints that are used in the proof generation process.

Committments: These are values that commit to certain intermediate values in the proof generation process. Commitments are used to hide the values of certain variables and make the proof more secure.

Final proof values: These are the values that are included in the final proof that is sent to the verifier. Final proof values are computed using the intermediate values generated during the proof generation process.

Prover Time

There is a trade off between concise proof generation and shorter prover time for the include some of the following reasons:

Zero-knowledge proof systems with smaller proof sizes often require additional computations to ensure that the proof is still valid despite the reduced amount of information. For example, in ZK-SNARK systems that use elliptic curve cryptography, such as the popular implementation used in the Zcash, the prover may need to perform additional pairing computations to generate the proof.

Recursive proof systems use a series of nested proofs to verify computations, and as the proof size decreases, the number of nested proofs required to validate the computation can increase, leading to longer prover times.

Public parameters are generated in advance and must be verified by the prover during the proof generation process. (See ZCash’s public parameters here)

Recursive SNARKs seek to address this trade-off.

Recursive SNARKs

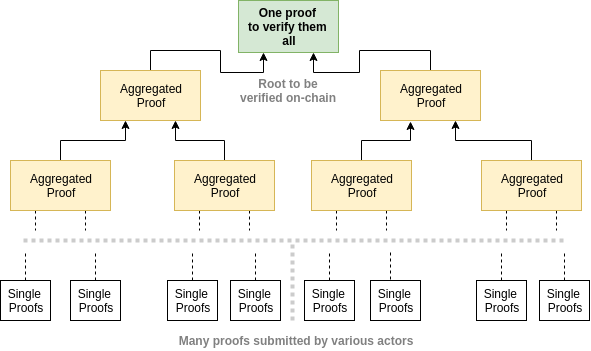

In a recursive SNARK, a proof is generated for each layer of computation or recursion, and then these proofs are recursively composed to generate a proof for the entire computation. This allows for efficient verification of computations that involve a large number of recursive or nested operations, such as computations involving Merkle trees, graph computations, and blockchains.

(See Mina protocol’s implementation here)

How it works

The recursive composition of proofs involves three main steps:

Proving the correctness of the computation for each layer or level.

Composing the SNARKs recursively to generate a proof for the entire computation.

Verifying the proof using the verification algorithm. (eg. Groth16, BCTV14, Pinocchio, PLONK, Aurora)

The recursive composition of SNARKs can be done in various ways, such as through the use of recursive proofs (eg. Marlin, Sonic, Fractal, Hyrax).

To generate a recursive SNARK proof, the computation is divided into multiple layers or levels, each of which is proven using a SNARK. The output of each layer is used as the input to the next layer, and so on, until the final output is generated.

Each SNARK proof is generated using a set of public parameters (See ZCash’s public parameters here) that are generated during the trusted setup phase. These parameters are publicly known through a trusted setup and are used to ensure the security of the proof through generating key pair (see proving and verifying key).

The recursive composition of SNARKs allows for the efficient verification of computations that involve multiple layers of recursion or nesting. The composition of the proofs allows for the efficient verification of computations that would otherwise be too complex or time-consuming to verify using traditional ZK-SNARK systems.

Use Cases

Streaming Proof Generation

This is a technique used to generate proofs for data batches.

This means that the prover can generate the proof as the computation is being performed, rather than waiting for the entire computation to complete before generating the proof. This technique is useful in applications where the computation is too large to fit in memory or where the computation is performed in a distributed or parallel fashion.

Streaming Proof Generation involves breaking the computation into smaller chunks, called batches, and generating proofs for each batch as the computation progresses. Each batch is verified before the next batch is generated, ensuring that the computation is correct at each stage. The proofs for each batch are combined to generate the final proof for the entire computation.

The key advantage of Streaming Proof Generation is that it allows for the generation of proofs for computations that are too large to fit in memory. This is achieved by breaking the computation into smaller batches that can be processed and verified independently. Additionally, Streaming Proof Generation can be used in distributed or parallel computations, where each node generates a proof for its part of the computation and the proofs are combined to generate the final proof.

Incrementally Verifiable Computation (IVC)

IVC involves breaking the computation into smaller chunks, called steps, and verifying each step as it is performed. Each step generates a proof that is verified before the next step is performed, ensuring that the computation is correct at each stage. The proofs for each step are combined to generate the final proof for the entire computation.

The key advantage of IVC is that it allows for the verification of computations that are too large to fit in memory. This is achieved by breaking the computation into smaller steps that can be processed and verified independently. Additionally, IVC can be used in distributed or parallel computations, where each node performs its part of the computation and the proofs are combined to generate the final proof.

IVC is particularly useful in applications where the computation is performed in a streaming or online fashion, such as in the case of real-time data processing or sensor networks. In these applications, the computation is performed on a continuous stream of data, and the verification of the computation must also be performed in real-time.

Final Thoughts

Using recursive SNARK setup allows for the possibility of having intermediate steps allowing developers to use algorithms that may be beneficial for a specific process.

For example, it is possible to use algorithms that generate longer proofs but faster verification time in certain steps and vice-versa for others.

This allows developers to have more degree of freedom in their SNARK setups.

Additionally, improvements such as folding schemes (eg. Nova) are implemented to reduce computation complexity and enhance compression allowing for more practical usage of such setup.